Patient Safety & Quality Improvement

Medical errors, root cause analysis, systems thinking, never events, hospital-acquired conditions, medication safety, transitions of care, surgical safety, infection control, fall prevention, and every framework and intervention used to improve safety across healthcare.

01 Historical Origins & the Safety Movement

Patient safety — the prevention of harm to patients from the processes of healthcare itself — emerged as a distinct discipline in the late 1990s, although its intellectual roots extend to Florence Nightingale's 19th-century hospital sanitation reforms and Ernest Codman's early 20th-century "end result system" advocating for outcome tracking. The modern era of patient safety began with the 1999 Institute of Medicine (IOM) report To Err Is Human: Building a Safer Health System, which estimated that 44,000 to 98,000 Americans died each year from preventable medical errors — more than from motor vehicle accidents, breast cancer, or AIDS. The report transformed safety from an individual-blame problem into a systems-engineering problem and catalyzed federal investment, regulatory action, and a new generation of safety science.

Preventable harm remains a leading cause of death in hospitalized patients. Every physician, nurse, pharmacist, and trainee practices inside a complex sociotechnical system where small design failures propagate into injuries. Understanding the origins, vocabulary, and frameworks of patient safety is the foundation for every quality-improvement activity, every morbidity and mortality conference, and every regulatory survey encountered in clinical practice.

Landmark Reports & Initiatives

| Year | Report / Initiative | Key Contribution |

|---|---|---|

| 1999 | To Err Is Human (IOM) | Estimated 44,000–98,000 preventable deaths annually; reframed error as systems failure rather than individual blame |

| 2001 | Crossing the Quality Chasm (IOM) | Defined six aims of quality: Safe, Timely, Effective, Efficient, Equitable, Patient-centered (STEEEP) |

| 2003 | Health Professions Education: A Bridge to Quality (IOM) | Called for training in quality improvement, informatics, and interdisciplinary teamwork |

| 2004 | WHO World Alliance for Patient Safety | Launched global safety challenges, starting with hand hygiene ("Clean Care is Safer Care") |

| 2005 | 100,000 Lives Campaign (IHI) | First large-scale collaborative to reduce preventable deaths through six evidence-based interventions |

| 2006 | 5 Million Lives Campaign (IHI) | Expanded national effort to prevent medical harm using bundles and rapid-response teams |

| 2008 | Triple Aim (Berwick et al., IHI) | Simultaneously improve population health, patient experience, and cost per capita |

| 2011 | CMS Partnership for Patients | Targeted 40% reduction in hospital-acquired conditions and 20% reduction in readmissions |

| 2014 | Quadruple Aim (Bodenheimer & Sinsky) | Added clinician well-being to the Triple Aim, recognizing burnout as a safety threat |

| 2015 | Make It Zero / Zero Harm movement | Goal of eliminating all preventable harm rather than reducing it incrementally |

| 2016 | Johns Hopkins BMJ analysis (Makary & Daniel) | Estimated medical error as the third leading cause of US death (>250,000/year) |

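| 2021 | WHO Global Patient Safety Action Plan 2021–2030 | Framework for eliminating avoidable harm in healthcare worldwide; adopted by the World Health Assembly in 2021, following its 2019 resolution on global action on patient safety |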

The Shift from Blame to System

Before To Err Is Human, medical culture responded to errors by identifying the individual clinician "at fault," followed by reprimand, retraining, or litigation. This blame-and-shame approach suppressed reporting and obscured the upstream system vulnerabilities that made the error possible. Modern safety science, drawn from aviation, nuclear power, and anesthesiology, reframed error as an emergent property of a complex system: the inevitable result of humans working inside imperfect workflows, technology, and organizational structures. The central insight is that most medical harm comes from good people working in bad systems.

The goal of safety work is not to eliminate human error (impossible) but to design systems that tolerate error, catch it before it reaches the patient, and make the safe action the easiest action. Forcing functions, checklists, standardization, and redundancy turn a single lapse into a near-miss instead of a sentinel event.

02 Epidemiology & Cost of Medical Error

Quantifying medical error is difficult because harm is often hidden, attributed to underlying disease, or never reported. Nevertheless, epidemiologic studies using chart review, administrative data, and trigger tools (such as the IHI Global Trigger Tool) consistently show that adverse events affect a large fraction of hospitalized patients and that a significant proportion of those events are preventable.

Key Epidemiologic Estimates

| Source | Finding |

|---|---|

| Harvard Medical Practice Study (1991) | Adverse events occurred in 3.7% of hospitalizations; 27.6% of those adverse events were due to negligence |

| To Err Is Human (1999) | 44,000–98,000 preventable deaths per year in US hospitals |

| HealthGrades Patient Safety Study (2004) | ~195,000 annual deaths attributable to in-hospital medical errors |

| OIG Report on Medicare Beneficiaries (2010) | 13.5% of Medicare inpatients experienced an adverse event; 44% deemed preventable |

| Classen et al. (Health Affairs 2011) | IHI Global Trigger Tool detected adverse events in ~33% of admissions, roughly 10 times as many events as voluntary reporting and other standard methods |

| Makary & Daniel (BMJ 2016) | Estimated >250,000 US deaths/year from medical error — third leading cause |

| WHO (2019) | Globally, 1 in 10 patients is harmed during hospital care; 2.6 million deaths/year in LMICs from unsafe care |

| IOM (2006) — Medication errors | At least 1.5 million preventable ADEs per year in the US; cost ~$3.5 billion in extra hospital costs |

Distribution of Harm by Category

| Category | Approximate Share of Preventable Harm |

|---|---|

| Adverse drug events (ADEs) | ~20–30% |

| Healthcare-associated infections | ~15–20% |

| Surgical/procedural complications | ~15% |

| Diagnostic errors (missed/delayed/wrong diagnosis) | ~10–15% of inpatient harm; up to 20% of ambulatory visits |

| Falls & pressure injuries | ~5–10% |

| Venous thromboembolism | ~5% |

| Other (device failure, retained foreign body, etc.) | ~10% |

The Economic Burden

The financial cost of preventable harm is staggering. To Err Is Human estimated the total national cost of preventable adverse events in the United States at $17 billion to $29 billion annually, a figure that includes lost income, lost household production, disability, and health care costs, with health care costs accounting for more than half of the total. A single central-line bloodstream infection adds roughly $46,000 and 10–20 days of length of stay. A pressure injury adds $20,000–$150,000 depending on stage. These costs are shouldered by payers, hospitals (through CMS nonpayment for hospital-acquired conditions), and patients themselves.

03 Just Culture & Systems Thinking

A just culture is an organizational environment in which staff feel safe to report errors and near misses without fear of inappropriate punishment, while still holding individuals accountable for reckless behavior. The concept, popularized by David Marx, replaces both the punitive blame culture and the overly permissive no-blame culture with a structured approach that distinguishes three categories of behavior.

Marx's Three Behaviors

| Behavior | Definition | Response |

|---|---|---|

| Human Error | Inadvertent action: slip, lapse, or mistake. "I shouldn't have done that." | Console the individual; fix the system, process, or training that allowed the error |

| At-Risk Behavior | Choice where risk is not recognized or is mistakenly believed to be justified (e.g., workaround becomes routine) | Coach the individual; remove incentives for the at-risk behavior; create incentives for the safer behavior |

| Reckless Behavior | Conscious disregard of substantial and unjustifiable risk (e.g., skipping time-out deliberately, practicing while impaired) | Disciplinary action (remedial or punitive) regardless of outcome |

When evaluating an incident, ask three questions: (1) Would a comparably trained peer in the same situation have made the same error (the substitution test)? If yes → human error. (2) Did the clinician drift into normalized deviance because the workaround was easier or rewarded? If yes → at-risk. (3) Did the clinician knowingly violate a rule that created substantial, unjustifiable risk? If yes → reckless.
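
The three questions above can be sketched as a small triage function. This is only an illustration: the function and parameter names are hypothetical, and the order of the checks (most serious category first, so that a knowing violation is never downgraded) is an assumption, not something Marx's framework or the text above prescribes.

```python
from enum import Enum


class Behavior(Enum):
    """Marx's three behaviors, paired with the response from the table above."""
    HUMAN_ERROR = "console"
    AT_RISK = "coach"
    RECKLESS = "disciplinary action"


def classify_behavior(*, knowingly_took_unjustifiable_risk: bool,
                      drifted_into_workaround: bool,
                      peer_would_have_erred: bool):
    """Return the matching Behavior, or None if no question is answered yes.

    Assumption: the checks run from most to least serious, so a conscious
    violation is classified as reckless even if other answers are also yes.
    """
    if knowingly_took_unjustifiable_risk:
        return Behavior.RECKLESS
    if drifted_into_workaround:
        return Behavior.AT_RISK
    if peer_would_have_erred:
        return Behavior.HUMAN_ERROR
    return None  # needs further review; the three questions did not settle it


result = classify_behavior(knowingly_took_unjustifiable_risk=False,
                           drifted_into_workaround=True,
                           peer_would_have_erred=True)
print(result.name, "->", result.value)
```

In practice these answers come out of interviews and a review of the work environment, so a function like this would at most record the outcome of that judgment rather than replace it.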

Systems Thinking

Systems thinking views any outcome — good or bad — as the product of many interacting components rather than a single cause. In healthcare this includes technology, workflow, communication, staffing, training, environment, and organizational culture. A medication overdose is not "the nurse's fault"; it is the result of a system in which look-alike vials, fatigue, interruption during drug preparation, unclear order formatting, and absent barcode scanning combined to defeat every safeguard. Fixing systems — not individuals — is the lever that prevents the next patient from being harmed.

Reason's Principles of Error Management

| Principle | Implication |

|---|---|

| Human fallibility is inevitable | Design for error tolerance, not error elimination |

| Errors are consequences, not causes | The "root cause" lies upstream in the system |

| Blame is reflex, not remedy | Punishment rarely prevents recurrence |

| Safety is a moving target | Continuous improvement > static compliance |

| Latent conditions can sleep for years | Proactive hazard identification (FMEA) is essential |

04 High-Reliability Organizations & Safety I/II

High-reliability organizations (HROs) operate for long periods in complex, hazardous environments with very few accidents. Examples include aircraft carriers, nuclear power plants, and commercial aviation. Weick and Sutcliffe identified five characteristics of HROs that healthcare systems are trying to emulate.

Five HRO Principles

| Principle | Meaning | Healthcare Example |

|---|---|---|

| Preoccupation with Failure | Treat every near-miss as a warning; actively look for weak signals | Reporting and analyzing a near-miss wrong-site surgery even though the patient was unharmed |

| Reluctance to Simplify | Resist easy explanations; seek the full complexity of the situation | RCA that considers fatigue, EHR design, staffing, and culture — not just "nurse error" |

| Sensitivity to Operations | Leadership maintains situational awareness of the frontline | Daily safety huddles; executive rounds at the bedside (WalkRounds) |

| Commitment to Resilience | Build capacity to recover when failures occur | Rapid-response teams, code teams, simulation-based training |

| Deference to Expertise | Authority migrates to whoever has the most knowledge, regardless of rank | A scrub tech stopping the case because the count is wrong; junior nurse escalating deterioration |

Safety I vs Safety II

Traditional safety thinking (Safety I) defines safety as the absence of accidents and focuses on finding and fixing what goes wrong. Erik Hollnagel's Safety II paradigm instead defines safety as the presence of capacity — the ability of systems to succeed under varying conditions — and studies how things usually go right despite constant variability.

| Dimension | Safety I | Safety II |

|---|---|---|

| Definition of safety | Absence of adverse events | Ability to succeed under expected and unexpected conditions |

| What to study | Accidents and incidents (things that go wrong) | Everyday work (things that go right) |

| View of humans | Hazard — source of variability and error | Resource — source of resilience and adaptation |

| Work-as-imagined vs work-as-done | Enforce compliance with procedures | Understand why workers must adapt procedures |

| Principal method | Root cause analysis, blame tree | Resilience engineering, appreciative inquiry |

Procedures written by managers describe work-as-imagined. The reality on the floor — workarounds, interruptions, missing supplies, adaptations — is work-as-done. The larger the gap, the higher the risk. Closing the gap requires listening to frontline staff, not rewriting more policies.

05 Types of Error: Slips, Lapses, Mistakes, Violations

James Reason's taxonomy distinguishes errors by the cognitive stage at which they occur. Slips and lapses are execution failures during routine (skill-based) action; mistakes are planning failures during rule-based or knowledge-based problem-solving; violations are deliberate deviations from safe procedures.

Reason's Error Classification

| Type | Cognitive Level | Nature | Clinical Example |

|---|---|---|---|

| Slip | Skill-based (automatic) | Execution error — wrong action despite correct intention | Pulling a potassium vial instead of saline from a similar-looking drawer |

| Lapse | Skill-based | Memory failure — omitting a step | Forgetting to flush an IV line; missing a dose on a busy shift |

| Rule-based mistake | Rule-based | Misapplying a good rule or applying a bad rule | Giving full-dose tPA to a stroke patient who has a contraindication |

| Knowledge-based mistake | Knowledge-based | Reasoning error in a novel situation due to incomplete information or bias | Anchoring on sepsis while missing pulmonary embolism |

| Routine violation | Deliberate | Cutting corners that the culture tolerates or rewards | Skipping the time-out on a quick procedure |

| Situational violation | Deliberate | Breaking rules because the situation seems to require it | Overriding the barcode scan when the system is down |

| Exceptional violation | Deliberate | One-off departure in unusual circumstances | Transfusing before crossmatch in exsanguinating trauma |

| Acts of sabotage | Malicious | Deliberate harm | Criminal diversion, poisoning (very rare) |

Diagnostic Error

Diagnostic error — missed, delayed, or wrong diagnosis — is a growing focus of patient-safety science. The National Academies' 2015 report Improving Diagnosis in Health Care concluded that most people will experience at least one diagnostic error in their lifetime. Cognitive biases that drive diagnostic error include:

| Bias | Definition | Example |

|---|---|---|

| Anchoring | Locking onto an early impression and failing to adjust with new data | Labeling every abdominal pain in a frequent-flier patient as gastritis |

| Availability heuristic | Judging likelihood by how easily examples come to mind | Overdiagnosing the last disease you saw in a similar patient |

| Premature closure | Accepting the first plausible diagnosis without considering alternatives | Stopping at "migraine" in a patient with subarachnoid hemorrhage |

| Confirmation bias | Seeking evidence that confirms the working diagnosis | Ignoring a normal troponin in a patient with atypical chest pain |

| Diagnosis momentum | Label persists across handoffs without being re-examined | "Pneumonia" from triage becomes the final diagnosis despite contradictory data |

| Framing effect | Diagnosis influenced by how the case is presented | "Psych patient with chest pain" downgrades the workup |

| Satisfaction of search (search satisficing) | Stopping the search after one finding on imaging | Missing a second fracture once the first is identified |

| Base rate neglect | Ignoring disease prevalence | Diagnosing a zebra when horses are far more likely |

06 Swiss Cheese Model & Active/Latent Errors

James Reason's Swiss Cheese Model is the most widely used metaphor in patient safety. Each layer of defense against harm (policies, procedures, training, technology, supervision) is imagined as a slice of Swiss cheese. Every slice has holes — weaknesses or gaps — and when the holes in multiple slices line up, a trajectory of accident opportunity passes through all defenses and reaches the patient.

Accidents require a concatenation of failures. A single error almost never harms a patient because redundant defenses usually catch it. Harm occurs only when multiple barriers fail simultaneously — which is why reviewing an event by asking "whose fault was it?" misses the point. The real question is: "Which barriers failed, and why were the holes there?"
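
A rough way to see why aligned holes are rare but not impossible is to multiply the chance that each layer misses an error. The sketch below assumes the layers fail independently, which real hospital defenses often do not (fatigue or downtime can open several holes at once), and every probability in it is a made-up illustrative value.

```python
from math import prod


def breach_probability(hole_probabilities):
    """Chance that an error passes every layer, assuming independent layers."""
    return prod(hole_probabilities)


# Hypothetical per-layer miss probabilities (illustrative, not measured data)
layers = {
    "CPOE dose alert": 0.10,
    "pharmacist verification": 0.05,
    "bedside barcode scan": 0.02,
}

all_layers = breach_probability(layers.values())

# Remove one slice (for example, scanning bypassed during downtime)
without_scan = breach_probability(
    p for name, p in layers.items() if name != "bedside barcode scan"
)

print(f"All layers in place:  {all_layers:.6f}")
print(f"Barcode scan removed: {without_scan:.6f}")
```

With these numbers, losing a single layer raises the chance of an error reaching the patient fifty-fold, which is the arithmetic behind asking which barriers failed instead of whose fault it was.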

Active vs Latent Errors

| Dimension | Active Error | Latent Error |

|---|---|---|

| Who commits it | Sharp-end worker (nurse, physician, pharmacist) | Blunt-end decision-makers (administrators, designers, regulators) |

| When the effect appears | Immediately, at the sharp end of care | Delayed — may lie dormant for months or years |

| Visibility | Obvious: the nurse administered the wrong drug | Hidden: the hospital stocked similar-looking vials next to each other |

| Example | Wrong-patient specimen draw | Two patients with identical names not flagged by the EHR |

| Remediation | Training, feedback, vigilance | Redesign of policies, technology, staffing, and culture |

Layers of Defense in a Typical Hospital

| Layer | Examples of Defenses | Common Holes |

|---|---|---|

| Technology | CPOE, barcode scanning, smart pumps, clinical decision support | Alert fatigue, override culture, downtime workarounds |

| Procedures | Checklists, protocols, order sets, double-checks | Skipping, copy-forward errors, outdated content |

| People | Training, certification, experience, teamwork | Fatigue, turnover, hierarchy gradients |

| Environment | Lighting, noise, ergonomics, supply stocking | Interruptions, crowding, look-alike packaging |

| Organization | Staffing ratios, culture, leadership, policies | Production pressure, understaffing, silos |

| Regulation | Accreditation, licensing, laws | Tick-box compliance, weak enforcement |

07 Sentinel, Adverse, Near-Miss & Severity Classes

Patient-safety events are classified by outcome (did harm reach the patient?) and by severity (how bad was the harm?). The Joint Commission's definitions are the most widely used in US hospitals.

Event Type Definitions

| Term | Definition | Example |

|---|---|---|

| Adverse event | An injury caused by medical management (not the underlying disease) that results in measurable harm | Hemorrhage from anticoagulant overdose; post-op wound infection |

| Preventable adverse event | An adverse event attributable to an error or failure to follow accepted practice | Fall from a bed without rails in a confused patient |

| Sentinel event | A patient-safety event that reaches a patient and results in death, permanent harm, or severe temporary harm requiring intervention to sustain life | Wrong-site surgery; suicide during inpatient stay |

| Never event | A subset of sentinel events that should never occur: serious, largely preventable, and clearly identifiable (NQF list of 29) | Retained surgical item; ABO-incompatible transfusion |

| Near miss (close call) | An event that did not reach the patient because of chance or timely intervention | Wrong drug detected at bedside scan before administration |

| No-harm event | An event that reached the patient but did not cause detectable harm | Wrong drug given but had no effect |

| Hazardous condition | A circumstance that increases probability of an adverse event, even if no event has occurred | Broken bed alarm; unlabeled syringe on the anesthesia cart |

NCC MERP Harm Severity Index (Medication Errors)

| Category | Description |

|---|---|

| A | Circumstances or events that have the capacity to cause error (hazardous condition) |

| B | An error occurred but the medication did not reach the patient |

| C | Error reached the patient but did not cause harm |

| D | Error reached the patient and required monitoring to confirm no harm and/or intervention to preclude harm |

| E | Error contributed to or resulted in temporary harm requiring intervention |

| F | Error contributed to or resulted in temporary harm requiring initial or prolonged hospitalization |

| G | Error resulted in permanent harm |

| H | Error required intervention to sustain life |

| I | Error resulted in the patient's death |
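
The index above reads naturally as a decision ladder. The following sketch encodes it as one function; the parameter names and the `harm` labels are hypothetical, and the order of the checks (most severe outcome first) is an assumption made so that each event lands in exactly one category.

```python
def ncc_merp_category(error_occurred, reached_patient=False,
                      monitoring_or_preventive_action=False,
                      harm="none", hospitalization=False,
                      life_sustaining_intervention=False, death=False):
    """Map the facts of a medication event to NCC MERP category A through I.

    harm is one of "none", "temporary", "permanent" (labels chosen for this sketch).
    """
    if harm not in ("none", "temporary", "permanent"):
        raise ValueError(f"unknown harm level: {harm!r}")
    if not error_occurred:
        return "A"  # capacity to cause error only
    if not reached_patient:
        return "B"
    if death:
        return "I"
    if life_sustaining_intervention:
        return "H"
    if harm == "permanent":
        return "G"
    if harm == "temporary":
        return "F" if hospitalization else "E"
    # Reached the patient without harm
    return "D" if monitoring_or_preventive_action else "C"


# Wrong dose given, extra glucose checks ordered, no harm found
print(ncc_merp_category(True, reached_patient=True,
                        monitoring_or_preventive_action=True))
```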

Sentinel Event Requirements (Joint Commission)

When a sentinel event occurs, Joint Commission–accredited organizations are expected to: (1) stabilize the patient and disclose the event; (2) notify organizational leadership; (3) conduct a comprehensive systematic analysis (typically RCA) within 45 business days of the event or of becoming aware of it; (4) develop and implement a corrective action plan with measurable outcomes; and (5) monitor the effectiveness of the actions. Voluntary reporting to the Joint Commission is encouraged but not mandatory.

08 Root Cause Analysis & the Sentinel Event Protocol

Root cause analysis (RCA) is a structured, retrospective investigation of an adverse event or near miss with the goal of identifying the underlying systems factors (not individuals) that contributed, and generating durable corrective actions. It is the cornerstone response to sentinel events.

Core Principles of RCA

| Principle | Implication |

|---|---|

| Focus on systems, not individuals | Ask "why," not "who" |

| Dig until you hit systemic, actionable causes | Stop when further "why" questions lead to management-level decisions |

| Use multidisciplinary teams | Include frontline staff, leadership, and patient/family perspectives |

| Be blameless and transparent | Protect reporters; share findings widely |

| Actions must be strong and measurable | Avoid "retrain and remind" as the only intervention — this is the weakest action |

RCA Process (Typical 8 Steps)

| Step | Activity |

|---|---|

| 1. Trigger | Event meets criteria (sentinel, near-miss, trend) |

| 2. Assemble team | Multidisciplinary, including frontline workers; exclude those with disciplinary authority over participants |

| 3. Define the event | Write a concise, factual problem statement |

| 4. Construct timeline | Chronological reconstruction using chart, interviews, devices, and logs |

| 5. Identify contributing factors | Use 5 Whys, fishbone, or barrier analysis |

| 6. Identify root causes | Systemic, latent factors that, if corrected, would prevent recurrence |

| 7. Develop action plan | Strong actions (forcing functions, architectural changes) preferred over weak actions (education) |

| 8. Measure & follow up | Pre-defined metrics; report progress to leadership |

Hierarchy of Corrective Actions

| Strength | Type | Examples |

|---|---|---|

| Strong | Eliminates or prevents the hazard | Architectural changes, forcing functions, connectors designed to be physically incompatible so that tubing cannot be misconnected, new technology with hard stops, removing concentrated KCl from floor stock |

| Intermediate | Controls or mitigates the hazard | Checklists, cognitive aids, read-back, double checks, standardized order sets, increased staffing |

| Weak | Relies on vigilance or memory | New policy, education, warning labels, disciplinary action, "be more careful" |

RCA2 (Root Cause Analysis and Action)

The National Patient Safety Foundation's 2015 report RCA2: Improving Root Cause Analyses and Actions to Prevent Harm added the "squared" to emphasize that the purpose of RCA is action, not just analysis. Key recommendations include: leadership participation, incorporation of strong corrective actions, 45-day completion goal, risk-based prioritization of events, and measurement of action effectiveness.

09 Fishbone, 5 Whys & Fault Tree Analysis

Several structured tools help an RCA team move from "what happened" to "why it happened" and expose the underlying contributing factors.

Fishbone (Ishikawa) Diagram

The fishbone diagram is a cause-and-effect tool in which the problem forms the "head" of a fish and the contributing causes are organized along "bones" representing categories. Common healthcare categories include the 6 Ms: Man (people), Machine (equipment), Method (procedures), Materials (supplies, drugs), Measurement (metrics, monitoring), and Milieu (environment, culture). Other variants use People, Process, Policies, Equipment, Environment, and Communication.

| Category | Contributing Factors to Explore |

|---|---|

| People (Man) | Training, fatigue, experience, staffing ratios, supervision |

| Method / Process | Protocols, workflow design, handoffs, order sets |

| Machine / Equipment | Device design, maintenance, interoperability, alarms |

| Materials | Drug packaging, supply availability, labeling |

| Measurement | Monitoring, metrics, feedback, surveillance |

| Environment (Milieu) | Lighting, noise, interruptions, physical layout, culture |

5 Whys

The 5 Whys technique asks "why" repeatedly until the root cause is exposed. It is most effective for single-cause events and is often embedded within a larger fishbone or RCA process.

Event: Patient received double dose of insulin.

Why? Two nurses both administered the 8 AM dose.

Why? There was no clear documentation of who had given it.

Why? The EHR required the nurse to leave the bedside to document.

Why? Workstations on wheels were unavailable on the unit.

Why? Capital budget for mobile workstations was cut two years ago.

Root cause: Inadequate point-of-care documentation infrastructure — a latent, systems-level problem.

Fault Tree Analysis

A fault tree starts with the undesired top event and works backward, using Boolean AND/OR gates to map every combination of contributing failures. It is quantitative when probabilities are attached to basic events, and it is especially useful for technology or device failures where multiple redundant defenses exist.
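
When probabilities are attached, the gate arithmetic is short enough to sketch. The example below assumes the basic events are independent, and the tree itself (an infusion overdose reaching the patient) and every probability in it are hypothetical values chosen for illustration.

```python
from math import prod


def and_gate(probabilities):
    """Output occurs only if all inputs occur (independent events assumed)."""
    return prod(probabilities)


def or_gate(probabilities):
    """Output occurs if any input occurs: 1 minus the chance that none occur."""
    return 1 - prod(1 - p for p in probabilities)


# Hypothetical basic-event probabilities per infusion (illustrative only)
p_programming_error = 0.01
p_wrong_concentration = 0.005
p_dose_limit_misses = 0.10
p_double_check_misses = 0.20

# Either initiating failure produces a wrong dose at the pump
p_wrong_dose_set_up = or_gate([p_programming_error, p_wrong_concentration])

# The top event needs the wrong dose AND both defenses to miss it
p_top_event = and_gate(
    [p_wrong_dose_set_up, p_dose_limit_misses, p_double_check_misses]
)

print(f"P(wrong dose set up)         = {p_wrong_dose_set_up:.5f}")
print(f"P(overdose reaches patient)  = {p_top_event:.6f}")
```

Changing one input at a time shows which basic event the top event is most sensitive to, which is usually the practical payoff of quantifying the tree.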

Barrier Analysis

Barrier analysis identifies the defenses (barriers) that should have prevented harm and asks which ones failed, which ones were missing, and why. It complements fishbone diagrams by forcing the team to think about the defensive structure rather than just causes. Barriers are classified as physical (locked cabinets, connectors that cannot be attached to the wrong line), administrative (policies, checklists), human (double checks, supervision), and technological (alarms, CDS, hard stops).

10 FMEA — Failure Mode & Effects Analysis

Failure Mode and Effects Analysis (FMEA) is a prospective (proactive) risk-assessment tool. While RCA is retrospective ("what went wrong?"), FMEA asks "what could go wrong?" It is used before implementing a new process, drug, technology, or facility to identify failure points and mitigate them in advance. The Joint Commission requires each accredited hospital to conduct at least one proactive risk assessment (typically FMEA) every 18 months.

FMEA Process

| Step | Activity |

|---|---|

| 1. Select a high-risk process | New chemotherapy protocol, EHR go-live, surgical procedure |

| 2. Assemble a multidisciplinary team | Include frontline users of the process |

| 3. Diagram the process | Flowchart every step and sub-step |

| 4. List failure modes | For each step, identify all ways it could fail |

| 5. Score each failure mode | Severity (S) × Occurrence (O) × Detectability (D) = Risk Priority Number (RPN) |

| 6. Prioritize | Address highest RPN first (conventionally >100) |

| 7. Redesign | Implement safeguards; recalculate RPN to verify improvement |

| 8. Monitor | Track the process post-implementation to validate assumptions |

Calculating the Risk Priority Number (RPN)

| Dimension | Scale (1–10) | Question |

|---|---|---|

| Severity (S) | 1 (no harm) to 10 (death) | How bad would the harm be if this failure occurred? |

| Occurrence (O) | 1 (very rare) to 10 (almost certain) | How often would this failure happen? |

| Detectability (D) | 1 (always caught) to 10 (never caught) | How likely is the failure to go undetected before reaching the patient? |

RPN = S × O × D (range 1–1000). Typically prioritize failure modes with RPN > 100 or with any severity score of 9–10.
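
The scoring and prioritization rule above can be sketched in a few lines. The class name, field names, and the three example failure modes are hypothetical; the thresholds (RPN above 100, severity of 9 or 10) are the conventional ones quoted above and are left as parameters because teams set their own.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    step: str
    description: str
    severity: int       # 1 (no harm) to 10 (death)
    occurrence: int     # 1 (very rare) to 10 (almost certain)
    detectability: int  # 1 (always caught) to 10 (never caught)

    def __post_init__(self):
        for name in ("severity", "occurrence", "detectability"):
            score = getattr(self, name)
            if not 1 <= score <= 10:
                raise ValueError(f"{name} must be 1-10, got {score}")

    @property
    def rpn(self) -> int:
        """Risk Priority Number = S x O x D."""
        return self.severity * self.occurrence * self.detectability


def prioritize(modes, rpn_threshold=100, severity_threshold=9):
    """Return flagged failure modes, highest RPN first."""
    flagged = [m for m in modes
               if m.rpn > rpn_threshold or m.severity >= severity_threshold]
    return sorted(flagged, key=lambda m: m.rpn, reverse=True)


# Hypothetical failure modes for a new chemotherapy ordering process
modes = [
    FailureMode("Order entry", "Dose based on outdated weight", 8, 4, 5),
    FailureMode("Compounding", "Wrong diluent volume", 9, 2, 3),
    FailureMode("Administration", "Pump label hard to read", 4, 3, 2),
]

for m in prioritize(modes):
    print(f"{m.step}: RPN {m.rpn} (S={m.severity})")
```

The second failure mode is flagged on severity alone even though its RPN is 54, which is why the severity override exists: a rare, easily detected failure can still be catastrophic.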

Healthcare FMEA (HFMEA) — VA Variant

The VA's National Center for Patient Safety developed HFMEA to simplify FMEA for healthcare. It replaces the RPN with a Hazard Score (Severity × Probability on a 4×4 matrix) and adds a "decision tree" asking whether a hazard is already controlled, is a single-point weakness, or is so obvious it needs no analysis. HFMEA is widely used in VA and DoD facilities.
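
A minimal sketch of the hazard score and decision tree follows. The level names on each axis, the cut-off score of 8, and the order of the questions are assumptions based on how HFMEA is commonly described, not details stated above; check them against the VA worksheet before relying on them.

```python
# Assumed 4-level scales; names and the threshold below are not from the text above
SEVERITY = {"minor": 1, "moderate": 2, "major": 3, "catastrophic": 4}
PROBABILITY = {"remote": 1, "uncommon": 2, "occasional": 3, "frequent": 4}


def hazard_score(severity: str, probability: str) -> int:
    """Severity x Probability on the 4x4 matrix (range 1-16)."""
    return SEVERITY[severity] * PROBABILITY[probability]


def needs_action(severity, probability, *, single_point_weakness,
                 already_controlled, obvious_hazard, threshold=8):
    """Walk the decision tree and return True if the hazard should be analyzed further."""
    score = hazard_score(severity, probability)
    # Low score and not a single-point weakness: stop
    if score < threshold and not single_point_weakness:
        return False
    # An effective control exists, or the hazard is obvious enough to be caught: stop
    if already_controlled or obvious_hazard:
        return False
    return True


print(hazard_score("catastrophic", "uncommon"))
print(needs_action("catastrophic", "uncommon",
                   single_point_weakness=False,
                   already_controlled=False,
                   obvious_hazard=False))
```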

11 PDSA, Lean, Six Sigma & DMAIC

Quality improvement (QI) draws from industrial engineering methods adapted to healthcare. The three dominant methodologies are the Model for Improvement (PDSA), Lean (from Toyota), and Six Sigma (from Motorola). They are complementary: PDSA is the engine of small tests of change, Lean focuses on waste and flow, and Six Sigma focuses on reducing variation.

The Model for Improvement & PDSA

Developed by Associates in Process Improvement and popularized by IHI, the Model for Improvement poses three questions before launching any change:

| Question | Purpose |

|---|---|

| 1. What are we trying to accomplish? | Define a specific, measurable, time-bound aim |

| 2. How will we know that a change is an improvement? | Identify measures (outcome, process, balancing) |

| 3. What change can we make that will result in improvement? | Generate change ideas grounded in theory |

Changes are then tested using the PDSA cycle:

| Phase | Activity |

|---|---|

| Plan | Define the test, prediction, data collection, and responsibilities |

| Do | Run the test on a small scale; collect data and observations |

| Study | Analyze the data; compare actual to prediction; summarize what was learned |

| Act | Adopt, adapt, or abandon the change; plan the next PDSA cycle |

The power of PDSA lies in iterative, small-scale testing. The first cycle might involve one nurse, one patient, one shift — not a unit-wide rollout. This keeps risk low, generates fast learning, and surfaces unintended consequences before scaling.

Three Types of Measures

| Measure | Question | Example (CAUTI bundle) |

|---|---|---|

| Outcome | Is the ultimate goal being met? | CAUTI rate per 1,000 catheter-days |

| Process | Are we doing the right things? | % of Foleys with documented daily necessity review |

| Balancing | Is the change causing unintended problems? | % of patients requiring reinsertion after early removal |
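
As a worked example, the three measures for the CAUTI bundle reduce to one rate and two percentages. The helper names and all of the counts below are hypothetical, chosen only to show the arithmetic.

```python
def rate_per_1000_device_days(events: int, device_days: int) -> float:
    """Events per 1,000 device-days (for example, CAUTIs per 1,000 catheter-days)."""
    if device_days <= 0:
        raise ValueError("device_days must be positive")
    return events * 1000 / device_days


def percent(numerator: int, denominator: int) -> float:
    """Simple percentage for process and balancing measures."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return 100 * numerator / denominator


# Hypothetical monthly data for one unit
outcome = rate_per_1000_device_days(events=3, device_days=1500)
process = percent(numerator=132, denominator=150)   # catheters with a daily necessity review
balancing = percent(numerator=4, denominator=80)    # early removals needing reinsertion

print(f"Outcome:   {outcome:.1f} CAUTIs per 1,000 catheter-days")
print(f"Process:   {process:.0f}% of catheters reviewed daily")
print(f"Balancing: {balancing:.0f}% reinserted after early removal")
```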

Lean

Lean thinking, derived from the Toyota Production System, defines value from the patient's perspective and eliminates anything that does not contribute to that value (waste). The eight wastes in healthcare are memorized with the mnemonic DOWNTIME: Defects, Overproduction, Waiting, Not-utilizing-talent, Transportation, Inventory, Motion, Extra-processing.

| Lean Tool | Purpose |

|---|---|

| Value Stream Map | Map every step from patient arrival to outcome; classify as value-added or waste |

| 5S | Workplace organization: Sort, Set in order, Shine, Standardize, Sustain |

| Kanban | Visual inventory signals to prevent stock-outs and overstock |

| Kaizen event | Rapid improvement workshop (typically 1 week) to redesign a process |

| A3 thinking | Structured one-page problem-solving format |

| Gemba walk | Leadership observation of work "where it happens" |

Six Sigma & DMAIC

Six Sigma aims to reduce process variation until a process produces no more than 3.4 defects per million opportunities (DPMO). It uses the DMAIC cycle for improving existing processes and DMADV for designing new ones.

| Phase | Activity |

|---|---|

| Define | Problem, customer, project scope, aim |

| Measure | Baseline data; validate the measurement system |

| Analyze | Identify root causes of variation |

| Improve | Design and pilot interventions |

| Control | Sustain the gain with control plans, SPC, and training |
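
To compare a process against the 3.4 defects-per-million target, the Measure phase usually converts baseline counts into defects per million opportunities (DPMO). The function name and the example counts are hypothetical; what counts as an "opportunity" is a definition the team has to agree on first.

```python
SIX_SIGMA_TARGET_DPMO = 3.4


def dpmo(defects: int, units: int, opportunities_per_unit: int) -> float:
    """Defects per million opportunities."""
    total_opportunities = units * opportunities_per_unit
    if total_opportunities <= 0:
        raise ValueError("there must be at least one opportunity")
    return defects * 1_000_000 / total_opportunities


# Hypothetical baseline: 12 errors in 4,000 doses, with 5 checkable steps per dose
baseline = dpmo(defects=12, units=4000, opportunities_per_unit=5)
print(f"Baseline: {baseline:.0f} DPMO (target {SIX_SIGMA_TARGET_DPMO})")
```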

12 Run Charts, Control Charts & A3 Thinking

Quality improvement depends on the ability to distinguish signal from noise in data. Two charts — the run chart and the control chart — are the workhorses of process monitoring and are built on statistical process control (SPC) theory developed by Walter Shewhart and W. Edwards Deming.

Run Chart

A run chart plots data over time with a median line. It uses four simple probability-based rules to distinguish random variation from non-random change (a "signal"):

| Rule | Signal |

|---|---|

| Shift | 6 or more consecutive points on one side of the median |

| Trend | 5 or more consecutive points all going up or all going down |

| Runs | Too few or too many runs for the number of data points (a run is a series of consecutive points on one side of the median; number of runs = median crossings + 1) |

| Astronomical point | A data point that is obviously different from all others |
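
The shift and trend rules in the table can be checked mechanically. The following is a minimal sketch, assuming the thresholds above (6 points for a shift, 5 for a trend) and two common conventions that the table does not state: points exactly on the median are skipped when counting a shift, and repeated values are ignored when counting a trend. The function names and the weekly counts are hypothetical.

```python
from statistics import median


def longest_shift(values):
    """Longest series of consecutive points on one side of the median.

    Points exactly on the median neither extend nor break a series.
    Requires a non-empty list.
    """
    centre = median(values)
    longest = current = 0
    side = 0
    for v in values:
        if v == centre:
            continue
        s = 1 if v > centre else -1
        current = current + 1 if s == side else 1
        side = s
        longest = max(longest, current)
    return longest


def longest_trend(values):
    """Longest series of consecutive points all rising or all falling."""
    longest = current = min(len(values), 1)
    direction = 0
    previous = None
    for v in values:
        if previous is not None and v != previous:
            step = 1 if v > previous else -1
            current = current + 1 if step == direction else 2
            direction = step
            longest = max(longest, current)
        previous = v
    return longest


def run_chart_signals(values, shift_rule=6, trend_rule=5):
    """Apply the shift and trend rules; thresholds default to the table above."""
    return {
        "shift": longest_shift(values) >= shift_rule,
        "trend": longest_trend(values) >= trend_rule,
    }


# Hypothetical weekly counts before and after an intervention at week 8.
weekly_counts = [5, 7, 6, 8, 7, 6, 8, 3, 2, 4, 3, 2, 3, 2]
print(run_chart_signals(weekly_counts))
```

The runs rule and the astronomical-point rule are left out: the first needs a lookup table of expected run counts, and the second is a visual judgment rather than a calculation.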

Control Chart (Shewhart Chart)

A control chart adds statistically derived upper and lower control limits (usually ±3 standard deviations from the mean) and distinguishes common-cause variation (inherent process noise) from special-cause variation (a signal that the process has changed). Different chart types are used for different data:

| Chart | Use | Example |

|---|---|---|

| p-chart | Proportion of defectives, variable sample size | % of surgical patients getting correct VTE prophylaxis |

| np-chart | Number of defectives, constant sample size | Number of charts missing a fall-risk assessment in a fixed weekly audit of 50 charts |

| c-chart | Count of defects per unit of measure | Needlestick injuries per month |

| u-chart | Defects per unit, variable sample size | CLABSIs per 1,000 line-days |

| X-bar & R | Continuous variables, small subgroups | Door-to-balloon time |
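
As a worked illustration of 3-sigma limits, the sketch below computes p-chart limits for a proportion measured with varying sample sizes. It assumes the standard binomial formula, centre line p̄ ± 3·sqrt(p̄(1 − p̄)/n), with limits clipped to the range 0 to 1; the function name `p_chart` and the monthly counts are hypothetical.

```python
from math import sqrt


def p_chart(defectives, sample_sizes):
    """Return the centre line and per-period limits for a p-chart.

    defectives[i] is the number of non-conforming cases among sample_sizes[i].
    """
    p_bar = sum(defectives) / sum(sample_sizes)
    rows = []
    for d, n in zip(defectives, sample_sizes):
        sigma = sqrt(p_bar * (1 - p_bar) / n)
        lcl = max(0.0, p_bar - 3 * sigma)
        ucl = min(1.0, p_bar + 3 * sigma)
        p = d / n
        rows.append({"p": p, "lcl": lcl, "ucl": ucl,
                     "special_cause": p < lcl or p > ucl})
    return p_bar, rows


# Hypothetical data: surgical patients per month who missed VTE prophylaxis.
missed = [10, 12, 8, 30]
patients = [100, 100, 100, 100]
centre, periods = p_chart(missed, patients)
for month, row in enumerate(periods, start=1):
    flag = "special cause" if row["special_cause"] else "common cause"
    print(f"month {month}: p={row['p']:.2f} "
          f"limits=({row['lcl']:.3f}, {row['ucl']:.3f}) {flag}")
```

Because the limits depend on each period's sample size, they widen for small months and narrow for large ones. In practice the centre line is usually estimated from a stable baseline period rather than from all points, including the suspect one, as this simplified sketch does.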

A3 Thinking

An A3 report (named for the European paper size) is a single-page structured problem-solving document used widely in Lean organizations. A typical A3 contains: background, current condition, goal, analysis of root causes, proposed countermeasures, implementation plan, and follow-up. Its value is less the paper and more the rigorous thinking and dialogue it forces between the author and the coach ("sensei").

13 Medication Error Pathway & ADE vs ADR

Medication errors are the most common source of preventable harm in hospitals. Preventable adverse drug events (ADEs) affect roughly 1 in 20 hospitalized patients and contribute to 7,000–9,000 US deaths per year. Errors can occur at any of five stages of the medication-use process.

Five Stages of the Medication-Use Process

| Stage | Typical Errors | Safeguards |

|---|---|---|

| Prescribing | Wrong drug, dose, route, frequency; unknown allergy; drug interaction; illegible handwriting | CPOE, clinical decision support, allergy alerts, pharmacist review, standardized order sets |

| Transcribing | Misreading handwriting; transcription typos; dangerous abbreviations | CPOE eliminates most transcription; avoid "do not use" abbreviations (U, IU, QD, QOD, MS, MSO4, MgSO4, trailing zero) |

| Dispensing | Wrong drug or dose selected; look-alike/sound-alike errors; mislabeling | Tall-Man lettering, automated dispensing cabinets, barcode verification, unit-dose packaging, pharmacist double-check |

| Administering | Wrong patient, drug, dose, route, or time; pump misprogramming | Five rights; barcode medication administration (BCMA); smart pumps with drug libraries; independent double checks for high-alert drugs |

| Monitoring | Failure to check labs (INR, troponin, drug levels); missed adverse effect | Automated lab review; pharmacy surveillance alerts; standing orders for monitoring |

The Traditional "Five Rights" (and Modern Additions)

The five rights of medication administration are right patient, right drug, right dose, right route, right time. Modern authors have added the right documentation, right reason, right response, and right to refuse. The five rights are system outcomes produced by safe design; they are not a checklist that a busy nurse can reliably perform from memory.

ADE vs ADR vs Medication Error

| Term | Definition | Preventable? | Example |

|---|---|---|---|

| Adverse drug event (ADE) | Any injury resulting from use of a drug | Some preventable, some not | Bleed from appropriately dosed warfarin; overdose from prescribing error |

| Adverse drug reaction (ADR) | Unintended, noxious response to a drug at normal doses | Usually not preventable | Anaphylaxis to first exposure to penicillin |

| Medication error | Any preventable event that may cause inappropriate medication use or patient harm | By definition yes | Wrong-patient administration; 10× dose error |

| Side effect | Any expected pharmacologic effect other than the intended one | Not always harmful | Dry mouth from an anticholinergic |

| Potential ADE | A medication error that could have caused injury but did not, either by chance or because it was intercepted before reaching the patient | Yes | Near-miss wrong dose detected before administration |

14 High-Alert Medications & the ISMP List

High-alert medications are drugs that bear a heightened risk of causing significant patient harm when used in error. The Institute for Safe Medication Practices (ISMP) maintains the authoritative list and recommends enhanced safeguards for each.

Major High-Alert Categories (ISMP Acute Care List)

| Category | Examples | Classic Harm |

|---|---|---|

| Anticoagulants | Heparin, LMWH, warfarin, DOACs | Hemorrhage, HIT |

| Insulin (all formulations) | Regular, NPH, glargine, U-500 | Hypoglycemia, coma, death |

| Opioids (IV, epidural, transdermal) | Morphine, hydromorphone, fentanyl | Respiratory depression, death |

| Concentrated electrolytes | KCl, NaCl >0.9%, MgSO4, phosphate | Arrhythmia, cardiac arrest |

| Chemotherapy (parenteral & oral) | Vincristine, methotrexate, cisplatin | Fatal overdoses; intrathecal vincristine |

| Moderate/deep sedation agents | Propofol, midazolam, ketamine | Apnea, hypotension |

| Neuromuscular blockers | Rocuronium, vecuronium, succinylcholine | Apnea; "awake paralysis" if given without sedation |

| Hypertonic glucose (≥20%) | D50W, TPN | Hyperglycemia, line necrosis |

| Thrombolytics | Alteplase, tenecteplase | ICH, systemic bleeding |

| Adrenergic agonists (IV) | Epinephrine, norepinephrine, phenylephrine | Extravasation, arrhythmia |

| PCA opioids | Morphine, hydromorphone | PCA by proxy; programming errors |

Concentrated Potassium Chloride

Concentrated KCl (2 mEq/mL) was one of the original catalysts of modern medication safety. Multiple deaths from accidental IV push of concentrated KCl led to the Joint Commission's requirement that concentrated electrolytes be removed from patient care units and dispensed only by pharmacy in pre-mixed solutions. This is a forcing function — you cannot make the error because the opportunity no longer exists on the floor.

Inadvertent intrathecal administration of vincristine is almost always fatal. To prevent it when vincristine is given alongside intrathecal chemotherapy delivered by lumbar puncture, ISMP and WHO recommend that vincristine be dispensed only in mini-bags of 25–50 mL (never in a syringe), so that it cannot be mistaken for an intrathecal syringe. This is another forcing function.

Enhanced Safeguards for High-Alert Drugs

| Safeguard | Description |

|---|---|

| Standardization | Single standard concentration per drug; remove non-standard vials |

| Independent double check | Two qualified clinicians independently verify drug, dose, pump settings, patient |

| Smart pump drug libraries | Hard upper dose limits that cannot be overridden |

| Auxiliary labels & color coding | "High alert — verify dose" warnings |

| Restricted access | Chemotherapy and neuromuscular blockers stored in locked, segregated areas |

| Protocolized ordering | Weight-based order sets; mandatory indication fields |

15 LASA, Reconciliation & Technology Safeguards

Look-Alike / Sound-Alike (LASA) Drugs

Look-alike, sound-alike (LASA) pairs are drugs whose names or packaging are easily confused. ISMP maintains a published list of confused-drug name pairs. Common LASA errors include:

| Drug Pair | Harm |

|---|---|

| Hydralazine / hydroxyzine | Untreated hypertension or oversedation |

| Celebrex / Celexa / Cerebyx | Wrong class entirely (NSAID / SSRI / anticonvulsant) |

| Heparin vials of different concentrations | 1000-fold overdose (Quaid twins, Cedars-Sinai 2007; Methodist Hospital, Indianapolis 2006) |

| Vinblastine / vincristine | Different dose ranges; fatal overdoses reported |

| Epinephrine 1:1,000 vs 1:10,000 | 10-fold dosing error |

| Humalog / Humulin | Rapid vs intermediate-acting insulin mix-up |

| Dopamine / dobutamine | Different hemodynamic effects in shock |

| Fentanyl / sufentanil | 10-fold potency difference |

Tall-Man lettering (e.g., hydrOXYzine vs hydrALAzine) visually distinguishes the differing portions of confusable names and has been adopted by the FDA and ISMP as a standard mitigation.

Medication Reconciliation

Medication reconciliation is the formal process of creating the most accurate list possible of all medications a patient is taking and comparing that list against new orders at every transition of care (admission, transfer, discharge). Discrepancies — omissions, duplications, wrong doses — are reconciled before they harm the patient. The Joint Commission made medication reconciliation a National Patient Safety Goal (NPSG.03.06.01).

| Transition | Key Risk |

|---|---|

| Admission | Home medications omitted or inaccurately recorded |

| Transfer (ICU ↔ floor) | Drip medications, pain regimens, and holds lost |

| Discharge | New prescriptions without stopping old; dose changes not communicated to PCP or patient |
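
The comparison step of reconciliation is a list comparison. The sketch below is a simplified illustration, assuming each list is a mapping from drug name to a free-text dose string; the function name `reconcile`, the drug lists, and the doses are hypothetical examples, not clinical guidance. It flags discrepancies for a clinician to resolve and does not decide which list is correct.

```python
def reconcile(home_meds: dict, new_orders: dict) -> dict:
    """Compare a home medication list against new orders.

    Names are matched case-insensitively; doses are compared as plain strings.
    """
    home = {name.lower(): dose for name, dose in home_meds.items()}
    orders = {name.lower(): dose for name, dose in new_orders.items()}
    shared = home.keys() & orders.keys()
    return {
        "omitted": sorted(home.keys() - orders.keys()),
        "new": sorted(orders.keys() - home.keys()),
        "dose_changed": sorted(n for n in shared if home[n] != orders[n]),
    }


# Hypothetical admission example.
home_list = {
    "Metoprolol": "25 mg twice daily",
    "Warfarin": "5 mg daily",
    "Atorvastatin": "40 mg daily",
}
admission_orders = {
    "metoprolol": "50 mg twice daily",
    "atorvastatin": "40 mg daily",
    "Enoxaparin": "40 mg SC daily",
}
for category, drugs in reconcile(home_list, admission_orders).items():
    print(category, drugs)
```

Exact name matching will miss brand/generic pairs and therapeutic duplications (two drugs from the same class), so a real system would need a drug dictionary; that is outside the scope of this sketch.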

Technology Safeguards

| Technology | Function | Evidence |

|---|---|---|

| CPOE (Computerized Provider Order Entry) | Structured electronic ordering; eliminates handwriting and transcription errors | ~50% reduction in serious medication errors when paired with CDS |

| CDS (Clinical Decision Support) | Allergy, interaction, dose-range, and duplicate alerts at the point of order | Effective only when alerts are specific; alert fatigue from over-firing reduces benefit |

| BCMA (Barcode Medication Administration) | Barcode scan at bedside verifies right patient, right drug | ~50% reduction in wrong-drug and wrong-dose administration errors |

| Smart pumps | Drug libraries with soft/hard dose limits | Prevents infusion overdoses when libraries are robust and overrides are monitored |

| Automated dispensing cabinets (Pyxis, Omnicell) | Secure, tracked storage with profile linking to orders | Reduce diversion and unauthorized access; limit overrides for high-alert drugs |

| Pharmacist order verification | Pharmacist reviews each order before administration | Catches 5–10% of orders requiring clarification or correction |
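
The difference between soft and hard smart-pump limits can be shown with a small decision function. This is a minimal sketch under stated assumptions: the class `DoseLimit`, the function `check_rate`, and the numeric limits are hypothetical placeholders in arbitrary units, not values from any real drug library or pump vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DoseLimit:
    soft_max: float  # exceeding this prompts a warning that can be overridden
    hard_max: float  # exceeding this is always blocked


def check_rate(rate: float, limit: DoseLimit, override_confirmed: bool = False) -> str:
    """Classify a programmed infusion rate against a drug-library entry."""
    if rate > limit.hard_max:
        return "blocked"  # hard limit: no override possible
    if rate > limit.soft_max:
        # Soft limit: proceeds only after the clinician confirms an override.
        return "accepted_with_override" if override_confirmed else "soft_alert"
    return "accepted"


# Placeholder library entry in arbitrary units.
example_limit = DoseLimit(soft_max=10.0, hard_max=20.0)
for rate, override in [(5, False), (15, False), (15, True), (25, True)]:
    print(rate, override, check_rate(rate, example_limit, override))
```

The hard limit is checked first so that an override can never unlock a rate above it, which is what makes it a forcing function rather than a warning. Logging every `accepted_with_override` result is one way to support the override monitoring mentioned in the table.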

16 HAC List, Never Events & NQF Framework

Hospital-acquired conditions (HACs) are complications that develop during a hospital stay. CMS identifies a set of HACs for which it will not pay the additional cost when acquired after admission, creating a financial incentive for prevention. The National Quality Forum maintains the list of Serious Reportable Events ("never events") — events so egregious they should never occur.

CMS Hospital-Acquired Conditions (Selected)

| HAC | Prevention Lever |

|---|---|

| Foreign object retained after surgery | Counts, imaging, radiofrequency tags |

| Air embolism | Proper central-line technique; Trendelenburg during insertion/removal |

| Blood incompatibility (ABO mismatch) | Two-person verification; BCMA for blood products |

| Stage III/IV pressure injury | Braden scale assessment; turning; support surfaces |

| Falls and trauma (fracture, dislocation, intracranial injury) | Fall risk assessment; bed alarms; non-slip footwear |

| Catheter-associated urinary tract infection (CAUTI) | Daily necessity review; aseptic insertion; closed drainage |

| Vascular catheter-associated infection (CLABSI) | Central-line bundle; chlorhexidine; maximal barrier |

| Surgical site infection after CABG, bariatric, orthopedic procedures | Pre-op antibiotics; normothermia; glucose control |

| Deep vein thrombosis / PE after THA or TKA | Mechanical + pharmacologic VTE prophylaxis |

| Poor glycemic control (DKA, hyperosmolar coma, hypoglycemic coma) | Protocolized insulin; hypoglycemia bundles |

| Iatrogenic pneumothorax with venous catheterization | Ultrasound-guided insertion |

NQF Serious Reportable Events ("Never Events") — Seven Categories

| Category | Examples |

|---|---|

| Surgical / Invasive Procedure | Wrong site, wrong patient, wrong procedure; retained foreign object; death of ASA Class I patient |

| Product or Device | Death or injury from contaminated drug/device; malfunctioning device; intravascular air embolism |

| Patient Protection | Discharge of infant to wrong person; elopement resulting in harm; inpatient suicide |

| Care Management | Medication error death; wrong blood product; hypoglycemia death; stage III/IV pressure injury; maternal or neonatal death in low-risk delivery |

| Environmental | Electric shock; burn; fall with injury; use of restraints resulting in death |

| Radiologic | Metallic object introduced into MRI area |

| Criminal | Abduction; sexual abuse; physical assault by staff or on staff |

17 HAI Prevention: CLABSI, CAUTI, SSI, VAP, CDI

Healthcare-associated infections (HAIs) affect roughly 1 in 25 hospitalized patients at any given time in the US, cause tens of thousands of deaths annually, and add billions of dollars in costs. Evidence-based bundles — small groups of interventions performed together and consistently — have driven dramatic reductions in the most common HAIs.

Central-Line-Associated Bloodstream Infection (CLABSI)

The IHI/Pronovost CLABSI bundle achieved near-zero infection rates in the Michigan Keystone ICU project:

| Element | Description |

|---|---|

| Hand hygiene | Before and after insertion and every line access |

| Maximal barrier precautions | Mask, cap, sterile gown, gloves, full-body drape on insertion |

| Chlorhexidine skin prep | >0.5% chlorhexidine with alcohol; allow to dry |

| Optimal site selection | Subclavian preferred over femoral; avoid femoral when possible |

| Daily necessity review | Remove lines as soon as no longer needed |

Catheter-Associated Urinary Tract Infection (CAUTI)

| Element | Description |

|---|---|

| Avoid unnecessary catheters | Use only for specific indications (output monitoring in critical illness, obstruction, stage 3/4 sacral ulcer, etc.) |

| Aseptic insertion | Sterile technique, sterile equipment |

| Closed drainage system | Never disconnect; keep bag below bladder |

| Daily necessity review | Remove as soon as possible; use nurse-driven removal protocols |

| Alternatives | Condom catheters in males; intermittent straight cath; bladder scanning |

Surgical Site Infection (SSI)

| Element | Description |

|---|---|

| Prophylactic antibiotics | Administer within 60 minutes before incision (120 min for vancomycin/fluoroquinolones); redose for long cases; stop within 24 hours |

| Appropriate antibiotic selection | Guided by procedure and local resistance patterns |

| Normothermia | Maintain core temp ≥ 36°C (forced-air warming) |

| Glucose control | Blood glucose < 180 mg/dL post-op (cardiac surgery and beyond) |

| Hair removal | Clippers only (not razors); at time of surgery |

| Skin antisepsis | Chlorhexidine-alcohol (preferred) or povidone-iodine |

| Supplemental oxygen | 80% FiO2 intraop in some colorectal surgery protocols |
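
The prophylactic-antibiotic timing rule in the table lends itself to an automated audit. The sketch below assumes the windows stated above (60 minutes before incision, 120 minutes for vancomycin and fluoroquinolones); the function name `prophylaxis_on_time`, the set `EXTENDED_WINDOW_DRUGS` (an illustrative subset of agents, not a complete list), and the timestamps are hypothetical.

```python
from datetime import datetime, timedelta

# Illustrative subset: vancomycin plus two fluoroquinolones.
EXTENDED_WINDOW_DRUGS = {"vancomycin", "ciprofloxacin", "levofloxacin"}


def prophylaxis_on_time(drug: str, infusion_start: datetime, incision: datetime) -> bool:
    """True if the antibiotic was started within its window before incision."""
    minutes = 120 if drug.lower() in EXTENDED_WINDOW_DRUGS else 60
    lead_time = incision - infusion_start
    return timedelta(0) <= lead_time <= timedelta(minutes=minutes)


# Hypothetical cases with incision at 08:00.
incision_time = datetime(2024, 1, 15, 8, 0)
cases = [
    ("cefazolin", datetime(2024, 1, 15, 7, 15)),   # 45 minutes before
    ("cefazolin", datetime(2024, 1, 15, 6, 30)),   # 90 minutes before
    ("vancomycin", datetime(2024, 1, 15, 6, 30)),  # 90 minutes before
]
for drug, start in cases:
    print(drug, start.time(), prophylaxis_on_time(drug, start, incision_time))
```

A dose started after incision gives a negative lead time and fails the check, which matches the intent of the measure.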

Ventilator-Associated Pneumonia (VAP) / Ventilator-Associated Events (VAE)

| Element | Description |

|---|---|

| Head of bed elevated 30–45° | Reduces aspiration |

| Daily sedation vacation | Permits spontaneous awakening trials |

| Daily SBT (spontaneous breathing trial) | Accelerates extubation |

| Oral care with chlorhexidine | Reduces oropharyngeal colonization |

| DVT prophylaxis | Part of combined bundle |

| Stress ulcer prophylaxis | PPI or H2 blocker |

| Subglottic suctioning ETTs | Reduces microaspiration |

Clostridioides difficile Infection (CDI)

| Element | Description |

|---|---|

| Antibiotic stewardship | Avoid unnecessary broad-spectrum antibiotics — the single most effective prevention |

| Contact precautions | Gown and gloves for known or suspected CDI |

| Soap-and-water hand hygiene | Alcohol-based rub does NOT kill spores |

| Dedicated equipment | Thermometers, stethoscopes stay in the room |

| Environmental cleaning | Sporicidal agents (bleach 1:10) |

| Private room | Or cohort with other CDI patients |

18 Falls, Pressure Injuries & VTE Prophylaxis

Inpatient Falls

Falls are the most common inpatient adverse event and a frequent cause of preventable harm, especially in older adults. Prevention begins with identifying high-risk patients using validated tools such as the Morse Fall Scale or Hendrich II.

| Intervention | Description |

|---|---|

| Universal precautions | Non-slip footwear, call light within reach, adequate lighting, uncluttered walkways |

| Risk-targeted interventions | Yellow armband/socks, bed/chair alarms, hourly rounding, toileting schedules |

| Environmental | Low beds, non-slip floors, grab bars, bedside commode |

| Medication review | Limit benzodiazepines, hypnotics, anticholinergics, orthostatic hypotension triggers |

| Delirium prevention | HELP protocol, sleep hygiene, early mobilization |

| Post-fall huddle | Immediate team review to determine cause and corrective action |

Pressure Injuries

The NPIAP (National Pressure Injury Advisory Panel, then known as the NPUAP) replaced the older "pressure ulcer" terminology with "pressure injury" in 2016 and defines four numbered stages plus two additional categories:

| Stage | Description |

|---|---|

| Stage 1 | Intact skin with non-blanchable erythema |

| Stage 2 | Partial-thickness skin loss with exposed dermis (shallow pink/red wound bed or serum-filled blister) |

| Stage 3 | Full-thickness skin loss; subcutaneous fat may be visible; no bone/tendon/muscle exposure |

| Stage 4 | Full-thickness skin and tissue loss with exposed or palpable fascia, muscle, tendon, ligament, cartilage, or bone |

| Unstageable | Full-thickness loss obscured by slough or eschar |

| Deep tissue injury (DTI) | Persistent non-blanchable deep red, maroon, or purple discoloration; intact or non-intact skin |

Hospital-Acquired Pressure Injury (HAPI) Prevention Bundle

| Element | Description |

|---|---|

| Risk assessment | Braden scale on admission and daily |

| Skin assessment | Head-to-toe on admission, every shift, at transfer |

| Repositioning | Every 2 hours; 30° side-lying; avoid shearing |

| Support surfaces | Pressure-redistributing mattresses and cushions for high-risk patients |

| Moisture management | Barrier creams; incontinence care |

| Nutrition | Protein, calorie, and hydration support; dietitian consult |

| Device-related prevention | Rotate O2 tubing, BiPAP masks; pad ETTs; check under cervical collars |

Venous Thromboembolism (VTE) Prophylaxis

VTE (DVT and PE) is one of the most common preventable causes of in-hospital death. Risk-stratified prophylaxis using the Caprini score (surgical) or Padua score (medical) guides interventions.

| Risk Level | Typical Prophylaxis |

|---|---|

| Low-risk medical / ambulatory | Early ambulation, mechanical (IPC/graduated stockings) |

| Moderate-risk medical | LMWH (enoxaparin 40 mg SC daily) or UFH 5,000 units SC BID/TID |

| High-risk surgical (orthopedic, cancer) | LMWH or DOAC; extended duration (up to 35 days after THA) |

| Contraindication to pharmacologic | Mechanical prophylaxis (IPC); reassess daily |

19 WHO Checklist, Time-Outs & SCIP Measures

The operating room has historically been one of the highest-risk environments in healthcare. Standardized checklists, time-outs, and bundled measures have transformed surgical safety and reduced morbidity and mortality.

WHO Surgical Safety Checklist

The WHO Surgical Safety Checklist, introduced by Atul Gawande and colleagues in 2008, has been shown in an 8-country study to reduce major complications by one-third and mortality by nearly half. It has three phases:

| Phase | When | Key Items |

|---|---|---|

| Sign In | Before induction of anesthesia | Patient identity, site, procedure, consent; site marked; anesthesia machine and medication check; pulse oximeter; known allergies; difficult airway/aspiration risk; blood loss risk and IV access |

| Time Out | Before skin incision | All team members introduce themselves by name and role; confirm patient, site, procedure; anticipated critical events (surgeon, anesthesia, nursing); antibiotic prophylaxis given within 60 min; imaging displayed |

| Sign Out | Before patient leaves OR | Name of procedure recorded; instrument/sponge/needle counts correct; specimen labeling; any equipment problems; key concerns for recovery and management |

Universal Protocol (Joint Commission)

The Joint Commission's Universal Protocol for preventing wrong-site, wrong-procedure, and wrong-person surgery has three components:

| Component | Description |

|---|---|

| Pre-procedure verification | Confirm patient identity, procedure, site using two identifiers and all available documents (consent, H&P, imaging, orders) |

| Site marking | Unambiguous mark (surgeon's initials) at the exact incision site by the person performing the procedure, with the patient awake and involved when possible |

| Time-out | Final verification immediately before the procedure; active participation of the entire team; any member empowered to stop the procedure |

SCIP Measures (Surgical Care Improvement Project)

| Measure | Target |

|---|---|

| SCIP Inf-1 | Prophylactic antibiotic within 1 hour before incision (2 hours for vancomycin / fluoroquinolones) |

| SCIP Inf-2 | Prophylactic antibiotic selection appropriate for procedure |

| SCIP Inf-3 | Prophylactic antibiotic discontinued within 24 hours after surgery end (48 hours for cardiac) |

| SCIP Inf-4 | Cardiac surgery patients with controlled 6 AM post-op blood glucose |

| SCIP Inf-6 | Appropriate hair removal (no razors) |

| SCIP Inf-9 | Urinary catheter removed by post-op day 1 or 2 |

| SCIP Inf-10 | Perioperative normothermia (core temperature ≥ 36°C) |

| SCIP VTE-1/2 | VTE prophylaxis ordered and given within 24 hours peri-operatively |

| SCIP Card-2 | Beta-blocker continuation in patients on chronic therapy |

20 Wrong-Site Surgery & Retained Items

Wrong-Site, Wrong-Patient, Wrong-Procedure Events

Wrong-site surgery is rare (approximately 1 per 112,000 procedures) but devastating when it occurs. Root causes typically include: incomplete pre-op verification, unclear or absent site marking, scheduling errors, production pressure, unfamiliar team, and skipping the time-out.

| Category | Failure Example | Safeguard |

|---|---|---|

| Wrong site | Left knee arthroscopy on right knee | Site marked by surgeon with initials; time-out |

| Wrong side | Left chest tube on the right | Bedside imaging review; marking with patient awake |

| Wrong level | Spinal surgery at wrong vertebral level | Intraoperative imaging and counting from known landmarks |

| Wrong procedure | Bilateral vs unilateral oophorectomy confusion | Pre-procedure verification of consent and indications |

| Wrong patient | Similar names, mixed charts | Two-identifier verification at every step |

Retained Surgical Items (RSI)

Retained surgical items include sponges, needles, instruments, and device fragments. Retained sponges are the most common. Standard prevention relies on manual counts before, during, and after the procedure, with discrepancies requiring imaging (radiograph or C-arm) before closure.

| Prevention Strategy | Description |

|---|---|

| Standardized counts | Before procedure, before closure of cavity, before skin closure, at hand-off of scrub role |

| Radiopaque sponges | All surgical sponges contain a radiopaque marker |

| Mandatory imaging for discrepancies | Incorrect count triggers intraoperative X-ray or C-arm prior to closure |

| Technology adjuncts | RFID-tagged sponges and wand detection; barcode sponge counting |

| "No-interruption" counts | Counts performed in a quiet, distraction-free environment |

| High-risk flags | Emergency procedures, BMI > 30, unexpected intraoperative changes, multiple cavities, long procedures |
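
The count-reconciliation logic behind the table is simple enough to sketch. The example below is a hypothetical illustration: the function names and item tallies are assumptions, and it models only the rule stated above, that any discrepancy triggers imaging before closure.

```python
def count_discrepancies(opened: dict, accounted_for: dict) -> dict:
    """Return items whose closing count differs from the number opened.

    A positive value means that many items are unaccounted for.
    """
    return {
        item: opened[item] - accounted_for.get(item, 0)
        for item in opened
        if opened[item] != accounted_for.get(item, 0)
    }


def closure_decision(opened: dict, accounted_for: dict) -> str:
    """Apply the 'incorrect count triggers imaging' rule."""
    if count_discrepancies(opened, accounted_for):
        return "imaging required before closure"
    return "count correct"


# Hypothetical closing count.
opened_items = {"sponges": 20, "needles": 6, "instruments": 35}
closing_count = {"sponges": 19, "needles": 6, "instruments": 35}
print(count_discrepancies(opened_items, closing_count))
print(closure_decision(opened_items, closing_count))
```

A correct count does not rule out a retained item, because a count can be wrong in both tallies; this is the rationale for the technology adjuncts listed in the table.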

21 Anesthesia, Fire & Specimen Safety

Anesthesia Safety

Anesthesiology is the safety success story of modern medicine. Mortality directly attributable to anesthesia dropped from approximately 1 in 10,000 in the 1970s to fewer than 1 in 200,000 today, through a combination of engineered forcing functions and standardized monitoring.

| Intervention | Effect |

|---|---|

| Pulse oximetry (standard since 1986) | Early detection of hypoxemia |

| Capnography | Confirms endotracheal placement; detects disconnection and hypoventilation |

| Standardized gas connectors (Pin Index, DISS) | Prevents wrong-gas administration — a classic forcing function |

| Vaporizer interlocks | Prevents simultaneous activation of two inhaled anesthetics |

| Malignant hyperthermia (MH) protocols | Dantrolene stocked; MH cart; hotline (MHAUS) |

| Difficult airway algorithms | ASA algorithm guides rescue; video laryngoscopy; surgical airway kits |

| Closed-claims analysis (ASA Closed Claims Project) | Systematic learning from closed malpractice claims |

Operating Room Fire Safety

OR fires require three elements (the fire triangle): ignition source (electrosurgery, laser), fuel (drapes, alcohol prep, hair), and oxidizer (supplemental O2 or N2O). Head and neck surgery is the highest-risk setting.

| Control | Description |

|---|---|

| Allow alcohol prep to dry (≥ 3 minutes; ≥ 1 hour for hair) | Prevents flammable vapor ignition |

| Minimize FiO2 | Use lowest clinically acceptable oxygen concentration during ESU use near the airway |

| Moisten sponges, gauze, drapes near airway | Reduces fuel load |

| Fire-risk assessment before each case | Team awareness of the three elements |

| Prepared fire response (RACE / PASS) | Rescue, Alarm, Confine, Extinguish; Pull, Aim, Squeeze, Sweep |

Surgical Smoke Evacuation

Surgical smoke from electrocautery and lasers contains carcinogens, viral DNA, and fine particulates. AORN guidelines recommend, and a growing number of state laws require, smoke evacuation systems at the site of generation.

Specimen Safety

| Risk | Mitigation |

|---|---|

| Mislabeled specimen (wrong patient) | Labeling at the point of collection; two-identifier confirmation; read-back |

| Lost specimen in transit | Chain-of-custody tracking; dedicated specimen couriers; barcode scanning |

| Wrong fixative (formalin vs saline vs frozen) | Standardized specimen kits; pre-operative communication with pathology |

| Discarded specimen | No specimen leaves the OR without verified labeling and documented handoff |

22 Handoff Failures, SBAR & I-PASS

Communication failures contribute to the majority of sentinel events analyzed by the Joint Commission. Transitions of care — between shifts, services, units, and facilities — are particularly vulnerable: information is lost, responsibility is diffused, and the mental model of the patient is fragmented. Structured handoff tools address these failures.

SBAR

SBAR is a standardized communication template developed by the US Navy and adapted to healthcare by Kaiser Permanente. It provides a shared mental model for urgent clinical communication and nurse-to-physician calls.

| Letter | Meaning | Content |

|---|---|---|

| Situation | What is happening now? | "I'm calling about Mr. Jones in 402; his blood pressure is 80/40." |

| Background | Relevant context | "72-year-old post-op day 1 from colectomy, on hydromorphone PCA." |

| Assessment | What you think is going on | "I'm worried he may be hypovolemic or septic." |

| Recommendation | What you want to happen | "Can you come see him now, and should I give a 500 mL bolus?" |

I-PASS

I-PASS is a structured handoff mnemonic developed for pediatric residents and associated with a 23% reduction in medical errors and a 30% reduction in preventable adverse events in a nine-center study.

| Letter | Element | Content |

|---|---|---|

| I | Illness severity | Stable, "watcher," or unstable |

| P | Patient summary | One-liner with events leading to admission, hospital course, plan |

| A | Action list | To-do items with timelines and ownership |

| S | Situation awareness & contingency planning | "If X happens, do Y" |

| S | Synthesis by receiver | Read-back and opportunity for questions |
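
One way to make the five I-PASS elements concrete is to treat a handoff as a structured record and check it for completeness. The sketch below is a hypothetical illustration: the class `IPassHandoff`, its field names, and the rule that every element must be non-empty are assumptions, not part of the I-PASS curriculum. In practice an empty action list can be legitimate.

```python
from dataclasses import dataclass, field

SEVERITIES = {"stable", "watcher", "unstable"}


@dataclass
class IPassHandoff:
    illness_severity: str
    patient_summary: str
    action_list: list = field(default_factory=list)
    contingency_plans: list = field(default_factory=list)
    receiver_synthesis_done: bool = False

    def missing_elements(self) -> list:
        """List the I-PASS elements that have not been completed."""
        missing = []
        if self.illness_severity not in SEVERITIES:
            missing.append("I: illness severity")
        if not self.patient_summary.strip():
            missing.append("P: patient summary")
        if not self.action_list:
            missing.append("A: action list")
        if not self.contingency_plans:
            missing.append("S: situation awareness and contingency planning")
        if not self.receiver_synthesis_done:
            missing.append("S: synthesis by receiver")
        return missing


# Hypothetical handoff before the receiver has read it back.
handoff = IPassHandoff(
    illness_severity="watcher",
    patient_summary="72-year-old, post-op day 1 after colectomy",
    action_list=["Recheck blood pressure at 22:00"],
    contingency_plans=["If hypotension recurs, call the senior resident"],
)
print(handoff.missing_elements())
```

Keeping receiver synthesis as an explicit field reflects that the handoff is incomplete until the receiver has read it back, however thorough the sender's portion is.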

Other Handoff Mnemonics

| Tool | Setting | Elements |

|---|---|---|

| ANTICipate | Physician handoff | Administrative, New info, Tasks, Illness, Contingency |

| SIGN-OUT | Physician handoff | Sick/DNR, Identifying data, General hospital course, New events of the day, Overall health status, Upcoming possibilities with plan, Tasks to complete overnight |

| 5 Ps | Nursing handoff | Patient, Plan, Purpose, Problems, Precautions |

| CUS | Assertive communication | I'm Concerned, I'm Uncomfortable, This is a Safety issue |

Read-Back & Closed-Loop Communication

For verbal or telephone orders and critical test results, the receiver must read back the order and have it confirmed by the sender. This is a Joint Commission National Patient Safety Goal. Closed-loop communication — sender directs a message to a named receiver, receiver acknowledges and confirms action — is a cornerstone of team training in codes and trauma resuscitations.

23 Teach-Back, Health Literacy & Interpreters

Patients who do not understand their diagnosis, medications, or discharge instructions are at dramatically higher risk of readmission, medication error, and poor outcomes. Nearly half of US adults have limited health literacy, and non-English speakers are at particular risk.

Teach-Back Method

The teach-back method asks the patient to explain back, in their own words, what they have been told. It is an evidence-based technique from AHRQ and endorsed by the Joint Commission. Key rules: (1) It is not a test of the patient — it is a test of the clinician's explanation. (2) If the patient cannot explain, re-teach using different words, then re-check. (3) Use for every new diagnosis, medication, and discharge instruction.

"I want to make sure I explained this clearly. Can you tell me in your own words what you're going to do when you get home to take care of your new diabetes?"

Not: "Do you understand?" (closed-ended; patients almost always say yes).

Health Literacy

| Principle | Application |

|---|---|

| Plain language | 6th grade reading level; short sentences; everyday words |

| Limit key messages | 3–5 key points per visit |

| Visual aids | Diagrams, pictograms, videos |

| Ask-Tell-Ask | Ask what patient knows, tell in plain terms, ask what they understood |

| "Brown bag" medication reviews | Patient brings all medications to visit for review |

| Universal precautions approach | Assume all patients may have difficulty understanding |

Language Services & Interpreters

Title VI of the Civil Rights Act and CMS regulations require meaningful language access for patients with limited English proficiency (LEP). Use of ad hoc interpreters (family members, especially children; bilingual staff without training) is discouraged because of higher error rates and confidentiality concerns.

| Interpreter Type | Use |

|---|---|

| In-person professional | Complex discussions, consent, end-of-life, sensitive topics |

| Telephone | Rapid, 24/7 availability; most routine encounters |

| Video remote interpreting (VRI) | Adds visual cues; preferred for ASL and complex discussions |

| Ad hoc (family, bilingual staff) | Emergencies only; not appropriate for consent or sensitive topics |

24 CRM, Briefings & Speak-Up Culture

Crew Resource Management

Crew Resource Management (CRM) originated in commercial aviation after NASA research showed that most airline crashes involved team communication failures rather than pilot skill deficits. CRM principles — flat hierarchy, cross-checking, assertion, shared mental models — have been adapted into healthcare as TeamSTEPPS (Team Strategies and Tools to Enhance Performance and Patient Safety), an AHRQ/DoD curriculum.

TeamSTEPPS Core Components

| Component | Tools |

|---|---|

| Communication | SBAR, call-out, check-back, handoff (I-PASS) |

| Leadership | Briefs, huddles, debriefs |

| Situation monitoring | STEP (Status of patient, Team members, Environment, Progress toward goal); cross-monitoring |

| Mutual support | Task assistance, feedback, advocacy and assertion (CUS, Two-Challenge Rule, DESC script) |

The Two-Challenge Rule

Any team member who believes a decision is unsafe is empowered to voice the concern. If the initial concern is dismissed, the team member must assert it a second time. If still unaddressed, the team member invokes an escalation policy (chain of command). This rule empowers the most junior team member to stop the line when safety is at risk.

CUS Words

The CUS escalation phrases are calibrated to signal rising concern without being confrontational: "I am Concerned," "I am Uncomfortable," "This is a Safety issue." Trained teams recognize these phrases as an explicit trigger to pause and reassess.

Briefings, Huddles & Debriefings

| Event | Timing | Purpose |

|---|---|---|

| Briefing | Before a shift, surgical case, or procedure | Shared mental model, anticipated issues, role clarity |

| Huddle | Mid-shift or ad hoc | Situational awareness update; adjust plan |

| Debriefing | After a case, code, or adverse event | What went well, what didn't, what to change next time |

Speak-Up Culture & Psychological Safety

Psychological safety, a concept developed by Amy Edmondson, is the shared belief that the team is safe for interpersonal risk-taking: speaking up about concerns, errors, or questions will not be punished. It is a strong predictor of team learning and error reporting. The Joint Commission's "Speak Up" campaign extends the same principle to patients and families by explicitly encouraging them to voice concerns.

Safety Culture Assessment

The AHRQ Hospital Survey on Patient Safety Culture (HSOPSC) is the most widely used instrument, measuring dimensions such as teamwork, communication openness, non-punitive response to error, staffing, handoffs, and organizational learning. Repeated measurement lets an organization benchmark changes in its culture over time, and culture scores have been linked to rates of hospital-acquired conditions (HACs) and mortality.

25 Patient & Family Engagement, Shared Decision-Making

Engaged patients and families are a critical defense layer. They can recognize errors that clinicians miss (a wrong medication, an unfamiliar rash, a missed detail in the history), and they are more adherent, better informed, and more satisfied when involved in decisions. The Joint Commission's "Speak Up" campaign and Patient and Family Advisory Councils (PFACs) institutionalize this role.

Levels of Engagement

| Level | Activity |

|---|---|

| Information | Patient receives clear information about condition and treatment |

| Consultation | Clinician seeks patient preferences before deciding |

| Partnership | Shared decision-making about the care plan |

| Co-design | Patients and families help redesign systems (PFAC, rounds, policies) |

| Governance | Patient representatives sit on quality and safety committees |

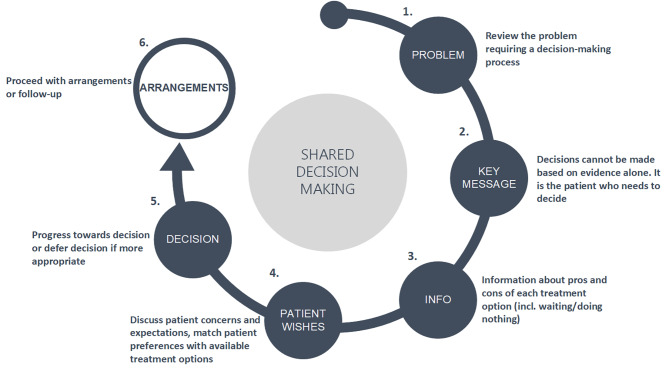

Shared Decision-Making (SDM)

Shared decision-making is the process of integrating clinical evidence with patient values and preferences, particularly for preference-sensitive decisions where more than one reasonable option exists (e.g., PSA screening, mastectomy vs lumpectomy, anticoagulation in AF).

| Step | Activity |

|---|---|

| Choice talk | Make the patient aware that a choice exists |

| Option talk | Compare the options, their benefits, and their harms using plain language or a decision aid |

| Decision talk | Support the patient in arriving at a preference-informed decision |

Patient as Safety Partner

| Action | Example |

|---|---|

| Hand hygiene reminders | Patient asks staff to wash hands before contact |

| Medication verification | Patient knows each medication, its purpose, and its color/shape |

| Identification | Patient confirms name/DOB before any test or medication |

| Site marking | Patient participates in marking the surgical site |

| Questioning | Patient encouraged to ask if something seems different or unfamiliar |

| Rapid response | Some hospitals empower patients/families to call a rapid response (e.g., Condition H) |

26 Error Disclosure, Apology Laws & the Second Victim

Principles of Error Disclosure

Patients have a right to know when a medical error has harmed them. The Joint Commission standard (RI.01.02.01) requires that patients be informed of unanticipated outcomes. Disclosure is ethically mandatory, preserves trust, and — contrary to historical belief — is associated with reduced litigation rates when done well.

| Element | Description |

|---|---|

| Timely | Disclose as soon as facts are known; do not wait for investigation to conclude |

| Honest | Acknowledge what happened in plain language; do not speculate |

| Empathic | Express genuine sorrow for the harm (apology) |

| Responsible | Explain what will be done to investigate and prevent recurrence |

| Ongoing | Maintain communication as investigation proceeds |

| Supportive | Offer emotional support and, when appropriate, a waiver of costs |

CANDOR & Communication-and-Resolution Programs

CANDOR (Communication and Optimal Resolution), developed by AHRQ, is a structured program for responding to adverse events that combines prompt disclosure, investigation, process improvement, and proactive offers of resolution (including compensation when appropriate). Programs at the University of Michigan Health System and the VA have shown that communication-and-resolution approaches decrease litigation, reduce defense costs, and improve patient and clinician satisfaction.

Apology Laws

Most US states have enacted apology laws (sometimes called "I'm Sorry" laws) that make physician expressions of sympathy inadmissible in malpractice litigation. The intent is to remove the chilling effect on open, compassionate communication after adverse events. Laws vary: some protect only expressions of sympathy, while others protect admissions of fault as well.

The Second Victim

Clinicians involved in adverse events frequently experience intense emotional distress, including guilt, anxiety, depression, and burnout. Albert Wu coined the term second victim in 2000 to describe this phenomenon. Unsupported second victims are at higher risk of subsequent errors, substance use, leaving the profession, and suicide.

| Stage (Scott et al.) | Description |

|---|---|

| 1. Chaos and accident response | Discovery of the event; initial response |

| 2. Intrusive reflections | Re-living the event; "what if" thinking |

| 3. Restoring personal integrity | Seeking acceptance from peers and leadership |

| 4. Enduring the inquisition | Investigation, litigation fears, feeling judged |

| 5. Obtaining emotional first aid | Finding a trusted listener |

| 6. Moving on | Dropping out, surviving, or thriving (personal growth) |

Peer Support Programs

Programs such as Johns Hopkins RISE (Resilience In Stressful Events), Brigham and Women's Peer Support, and MITSS (Medically Induced Trauma Support Services) offer confidential, non-judgmental peer counseling for clinicians after adverse events. Availability of peer support within 24–48 hours is an emerging safety-culture benchmark.

27 Reporting Systems & Organizational Learning

An organization cannot improve what it cannot see. Reporting systems — voluntary and mandatory — create visibility into adverse events, near misses, and hazardous conditions so that leadership can act.

Voluntary vs Mandatory Reporting

| Type | Description | Example |

|---|---|---|

| Mandatory (state) | Laws requiring report of serious adverse events | State adverse event reporting (e.g., Minnesota's NQF-based reporting) |

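| Mandatory (federal) | CMS conditions of participation; FDA Medical Device Reporting (MDR) by manufacturers and device user facilities; manufacturer reporting of serious adverse drug events (clinician MedWatch reports are voluntary) | Device-related deaths and serious injuries; serious adverse drug reactions reported by manufacturers |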

| Voluntary (external) | De-identified or anonymous national databases | Joint Commission sentinel event database; ISMP MERP; AHRQ Patient Safety Organizations (PSOs) |

| Voluntary (internal) | Hospital incident reporting (e.g., RL, Midas) | Staff-reported falls, medication errors, near-misses |

Patient Safety Organizations (PSOs)

The Patient Safety and Quality Improvement Act of 2005 created federal privilege and confidentiality protections for data reported to PSOs listed by AHRQ. This allows hospitals to share safety data, analyses, and corrective actions without fear of discovery in litigation, fostering broader learning across the healthcare system.

Culture of Reporting

Reporting rates vary enormously between units and between organizations, reflecting culture more than actual event rates. Characteristics of high-reporting cultures include: ease of reporting (one-click), feedback to the reporter ("closing the loop"), visible action, absence of punishment for good-faith reporting, and leadership attention. Low reporting is not a sign of safety — it is a sign of blindness.

Morbidity & Mortality (M&M) Conferences

M&M conferences are the traditional physician forum for learning from adverse events. Historically they focused on individual blame; modern M&Ms use systems analysis, blameless language, and structured frameworks (such as the case format used in AHRQ WebM&M) to identify and share lessons.

Organizational Learning

| Mechanism | Description |

|---|---|

| Patient safety dashboard | Real-time, visible metrics at unit and enterprise level |

| Daily safety huddle | Leadership briefing on prior 24h events and next 24h risks |

| WalkRounds (executive rounding) | Senior leaders visit units and discuss safety with staff |

| Great Catches recognition | Publicly celebrating near-miss reports |

| Shared learning forums | Cross-unit and cross-hospital sharing of lessons learned |

| After-action reviews | Structured review after codes, emergencies, or drills |

28 Regulatory Bodies, References & High-Yield Review

Major US & International Bodies

| Organization | Role |

|---|---|

| The Joint Commission (TJC) | Accreditation; National Patient Safety Goals; sentinel event database; Universal Protocol |

| CMS | Conditions of participation; HAC non-payment; value-based purchasing; HCAHPS; public reporting |

| AHRQ (Agency for Healthcare Research and Quality) | Research funding; TeamSTEPPS; HSOPSC; CANDOR; Patient Safety Network (PSNet); WebM&M |

| IHI (Institute for Healthcare Improvement) | Model for Improvement; Triple/Quadruple Aim; 100K Lives campaign; Open School |

| NQF (National Quality Forum) | Serious reportable events ("never events"); endorsed quality measures |

| ISMP (Institute for Safe Medication Practices) | High-alert medication list; look-alike/sound-alike (LASA) drug name list; error-prone abbreviations list; MERP |

| NPSF / IHI Lucian Leape Institute | Safety research and advocacy (NPSF merged with IHI in 2017) |

| FDA | MedWatch; drug and device adverse event reporting; REMS |

| OSHA | Worker safety standards (bloodborne pathogens, surgical smoke) |

| CDC / NHSN | Healthcare-associated infection surveillance and definitions |

| WHO | Global Patient Safety Challenges; Surgical Safety Checklist; World Patient Safety Day |

| Leapfrog Group | Employer-driven public hospital safety grades |

Joint Commission National Patient Safety Goals (Recurring Themes)

| Goal | Requirement |

|---|---|

| Identify patients correctly | Use at least two identifiers (e.g., name + DOB) |

| Improve staff communication | Report critical test results on time; read-back |

| Use medications safely | Label all medications/containers; reconcile on transitions; manage anticoagulants |

| Use alarms safely | Address alarm fatigue; prioritize clinical alarms |

| Prevent infection | Hand hygiene; CLABSI, CAUTI, SSI, MDRO prevention |

| Identify patient safety risks | Suicide risk screening in behavioral health |

| Prevent mistakes in surgery | Universal Protocol (pre-procedure verification, site marking, time-out) |
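
The first goal in the table, matching at least two identifiers, can be illustrated with a short sketch. The class `Identifiers`, the function `verify_patient`, and the use of a medical record number (MRN) as a third identifier are assumptions made for this example, not part of the Joint Commission requirement text.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Identifiers:
    name: Optional[str] = None
    date_of_birth: Optional[date] = None
    mrn: Optional[str] = None  # medical record number (assumed third identifier)


def verify_patient(stated: Identifiers, wristband: Identifiers,
                   minimum: int = 2) -> bool:
    """True only if at least `minimum` identifiers were compared and none disagreed."""
    compared = 0
    for field in ("name", "date_of_birth", "mrn"):
        a, b = getattr(stated, field), getattr(wristband, field)
        if a is None or b is None:
            continue  # identifier missing on one side; it cannot be counted
        if isinstance(a, str):
            # Ignore case and surrounding whitespace when comparing text fields.
            a, b = a.strip().casefold(), b.strip().casefold()
        if a != b:
            return False  # any mismatch stops the process
        compared += 1
    return compared >= minimum
```

In this sketch a single matching name is not enough: `verify_patient` returns `False` until a second identifier, such as date of birth, has also been compared and found to agree.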

Key References & Tools

| Reference | Author / Organization or Description |

|---|---|

| To Err Is Human (1999) | IOM / National Academies |

| Crossing the Quality Chasm (2001) | IOM |

| Improving Diagnosis in Health Care (2015) | National Academies |

| Free from Harm (2015) | NPSF |

| RCA² (RCA2): Improving Root Cause Analyses and Actions to Prevent Harm (2015) | NPSF |

| The Checklist Manifesto (Gawande) | Popular book on checklists in medicine |

| Managing the Risks of Organizational Accidents (Reason) | Foundational theory of active/latent error and Swiss Cheese |

| AHRQ PSNet / WebM&M | Case-based safety education |

| IHI Open School | Free QI and safety curriculum |

| TeamSTEPPS 3.0 (2023) | AHRQ teamwork and communication curriculum |

Comprehensive Error-Type Summary Table